How does a car benefit from having DeepSeek installed?

Artificial Intelligence thread

- Thread starter 9dashline

- Start date

I can think of one: you have an accident and get knocked out. The car senses that it's upside down, or that something is not right, calls emergency services, and DeepSeek takes over to get help to you.

Big news! Qwen 3 is here!

A lot of models, all open sourced!

Among them is an impressive 235B parameter MoE model (the same model family as DeepSeek)

Trained on 36T tokens! That's impressive, the biggest token budget among open-source models (closed ones don't publish this info)

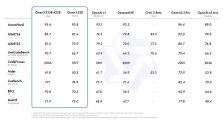

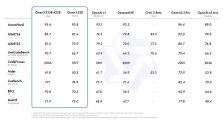

Today, we are excited to announce the release of Qwen3, the latest addition to the Qwen family of large language models. Our flagship model, Qwen3-235B-A22B, achieves competitive results in benchmark evaluations of coding, math, general capabilities, etc., when compared to other top-tier models such as DeepSeek-R1, o1, o3-mini, Grok-3, and Gemini-2.5-Pro. Additionally, the small MoE model, Qwen3-30B-A3B, outcompetes QwQ-32B with 10 times of activated parameters, and even a tiny model like Qwen3-4B can rival the performance of Qwen2.5-72B-Instruct.

While Qwen2.5 was pre-trained on 18 trillion tokens, Qwen3 uses nearly twice that amount, with approximately 36 trillion tokens covering 119 languages and dialects.

Baba is moving faster than DeepSeek. I think DeepSeek's edge is gone now that all the big Chinese tech companies are moving aggressively on AI. DeepSeek can only keep up if they get a huge funding boost. Otherwise just having 200 really good employees will not cut it.

Qwen 3 scores are unreal. I mean the flagship model is better than o3-mini in reasoning, that's crazy.

But even crazier is the 4B model being as good as the 72B model from Qwen2.5, which itself was as good as the Llama3-70B model. So yeah, that's ridiculous.

Gemini 2.5 Pro is still better though (not sure about o3, since it seems to perform better in benchmarks than in reality), and it is multimodal as well. Google is already starting to train their next model, and no one has been able to beat them on context length. Competition is getting heated and winners are starting to emerge. I'm still hoping for a DeepSeek upset, because Google is looking invincible right now.

It's important to note that Qwen3 was never aiming for SOTA, since a 235B model can’t compete with models several times its size, such as o3 and 2.5-pro. They’re focusing on building extremely efficient models for local use. In that sense, DeepSeek and Qwen complement each other very well, with DeepSeek focusing on large SOTA models like V3 (currently the top non-reasoning model), while Qwen focuses on leading in small, efficient models that can be run locally.

It would be a waste of resources for Qwen to release a SOTA model that briefly surpasses o3/2.5-pro, only to be overtaken once R2 launches. This release proves that China is increasingly dominating efficiency at the 0.6B to 235B scale with Qwen3, and is now the undisputed leader in open-source AI across all model sizes. Qwen3 has made massive progress in efficiency and clearly surpasses expectations.

Honestly, it doesn't seem very productive just to spend loads of money making a model that will look good on the benchmarks for about two months before it gets overtaken by someone else who releases a newer model. There needs to be more focus on utility.

escobar

Brigadier

Seeking the next DeepSeek: What China’s generative AI registration data can tell us about China’s AI competitiveness

Against that backdrop, I think it’s a mistake to view DeepSeek as some kind of anomaly, rising out of otherwise fallow ground. China’s generative AI industry – both those exploring foundational models and those releasing consumer-facing apps – is varied and vibrant, with many new competitors entering the fray on a monthly basis. Given this environment, DeepSeek was more of an inevitability than an anomaly, and China very well may give rise to more impactful foundational LLMs in the not-too-distant future.

They are not making these models "to look good" in benchmarks. For a start, the models are impressive for their size; from my tests they are pretty good. The models are made with efficiency in mind: Qwen3-30B-A3B is as good as a 70B model but can run on a CPU at a good speed. Qwen3-235B-A22B is as good as Claude and OpenAI (in my experience) but can fit 4-bit quantized in 160GB of RAM, or on four H100s unquantized, and run at a decent speed. These models are made to be run as cheaply as possible. Another thing the Qwen team is focusing on with this release is tool calling and agentic modes, which these models seem to be pretty good at.

I don't think they have illusions that the model will be SOTA for a long time. That is why they released the models under an Apache license instead of the typical Qwen license: these models seem to be a snippet of what they want for future models, which is cheap-to-run, highly intelligent models with good agentic capabilities. It is possible, though, that they will release an even bigger model for their paid chat.

The Qwen 3 coder is going to be a beast given how well these models perform.
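
For anyone who wants to sanity-check the memory figures quoted above for Qwen3-235B-A22B, here is a rough back-of-the-envelope sketch. It counts the weights only (no KV cache, activations, or quantization metadata), treats 1 GB as 1e9 bytes, and assumes the 80 GB variant of the H100; the function names are made up for this illustration.

```python
def weight_memory_gb(params_billions: float, bits_per_param: int) -> float:
    """Approximate size of the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return params_billions * bits_per_param / 8


def fits(params_billions: float, bits_per_param: int, budget_gb: float) -> bool:
    """True if the weights alone fit in the given memory budget."""
    return weight_memory_gb(params_billions, bits_per_param) <= budget_gb


TOTAL_PARAMS_B = 235      # Qwen3-235B-A22B total parameters, in billions
RAM_BUDGET_GB = 160       # RAM figure quoted in the post above
H100_BUDGET_GB = 4 * 80   # assumption: four 80 GB H100 cards

for bits in (4, 8, 16):
    size = weight_memory_gb(TOTAL_PARAMS_B, bits)
    print(f"{bits:>2}-bit weights: {size:6.1f} GB | "
          f"fits 160 GB RAM: {fits(TOTAL_PARAMS_B, bits, RAM_BUDGET_GB)} | "
          f"fits 4x H100: {fits(TOTAL_PARAMS_B, bits, H100_BUDGET_GB)}")
```

Under these assumptions the 4-bit weights come to about 117.5 GB, which leaves headroom inside 160 GB. The 16-bit weights come to about 470 GB, which is more than four 80 GB cards hold, while 8-bit weights (about 235 GB) would fit. So the "four H100s" figure only works out in this estimate if the weights are stored at 8 bits or less.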